Prompt Engineering | TryHackMe Write-up

Complete walkthrough for Prompt Engineering TryHackme room. Learn how LLMs process text and craft effective prompts for security and adversarial testing.

This is my write-up for the TryHackMe room on Prompt Engineering. Written in 2026, I hope this write-up helps others learn and practice cybersecurity.

Task 1: Introduction

This section introduces the foundational concepts of Large Language Models (LLMs) and outlines the learning path to becoming an effective Prompt Engineer. It sets the stage for understanding tokens, nondeterminism, control parameters, and the essential techniques needed to securely and successfully pilot an LLM.

Prerequisites

I understand the learning objectives and am ready to learn about prompt engineering!

No answer needed

Task 2: LLM Fundamentals

This task explains the core mechanics of how LLMs process text using tokens (roughly 3-4 characters each) rather than whole words. It introduces the concept of nondeterminism (why identical inputs yield different outputs) and details how to control model behavior using parameters like temperature (randomness), max tokens (length limits), top-p (nucleus sampling), and context windows (memory capacity).

What is the term for the smallest units that an LLM breaks text into in order to process it?

tokens

What parameter would you set to 0.0 to make an LLM behave as close to deterministic as possible?

temperature

What parameter restricts which tokens the model considers by limiting selection to a cumulative probability mass?

top-p

What term describes the maximum working memory of an LLM, measured in tokens?

context window

Task 3: The Anatomy of a Prompt

This section breaks down a well-architected prompt into four essential pillars: Instruction (the core task), Context (relevant background), Output format (the desired structure), and Constraints (strict rules or limits). It emphasizes that finding the right balance between specificity and verbosity is key to preventing ambiguity and engineering reliable AI responses.

Which pillar instructs the model on how the answer should be structured, such as bullet points or a JSON object?

output format

Which pillar specifies rules or limits imposed on the model's response, such as enforcing a tone or forbidding certain topics?

constraints

Which pillar provides the AI with relevant background information or scenario so it understands the situation?

context

Which pillar of prompt engineering defines the core command or action you want the AI to perform?

instruction

Task 4: System vs User Prompts

This task contrasts persistent, developer-defined system prompts (which set overall application rules and tone) with dynamic, session-specific user prompts. It highlights a critical security flaw: because LLMs process all instructions as a single text stream, the intended hierarchy can easily be subverted by malicious user inputs that mimic authoritative system commands.

What type of prompt is developer-defined, persistent, and remains constant across all sessions?

system prompt

What is the term for the intended order of priority between system and user instructions in an LLM application?

instruction hierarchy

Task 5: Advanced Prompting Techniques

This section explores advanced methodologies for refining AI outputs. It covers the Shot Spectrum (Zero-shot, One-shot, and Few-shot learning) for in-context learning, the Chain-of-Thought (CoT) technique to force models into step-by-step reasoning, and the use of Prompt Templates to standardize and streamline recurring tasks.

What is the term for the prompting technique introduced by Google researchers in 2022 that asks models to break tasks into intermediate reasoning steps?

chain-of-thought

What prompting technique involves providing no examples and relying entirely on the model's pre-trained knowledge?

zero-shot

What prompting technique involves saving and reusing a standardised prompt structure for recurring tasks?

prompt templates

What simple phrase can be added to a prompt to trigger Zero-shot Chain-of-Thought reasoning?

let's think step by step

Task 6: Challenge

This is a practical exercise where you interact with the PromptSec agent to write functional prompts for real-world security scenarios. You are graded based on how well you apply the previously learned techniques, needing to score a total of 40 points to successfully extract the final flag.

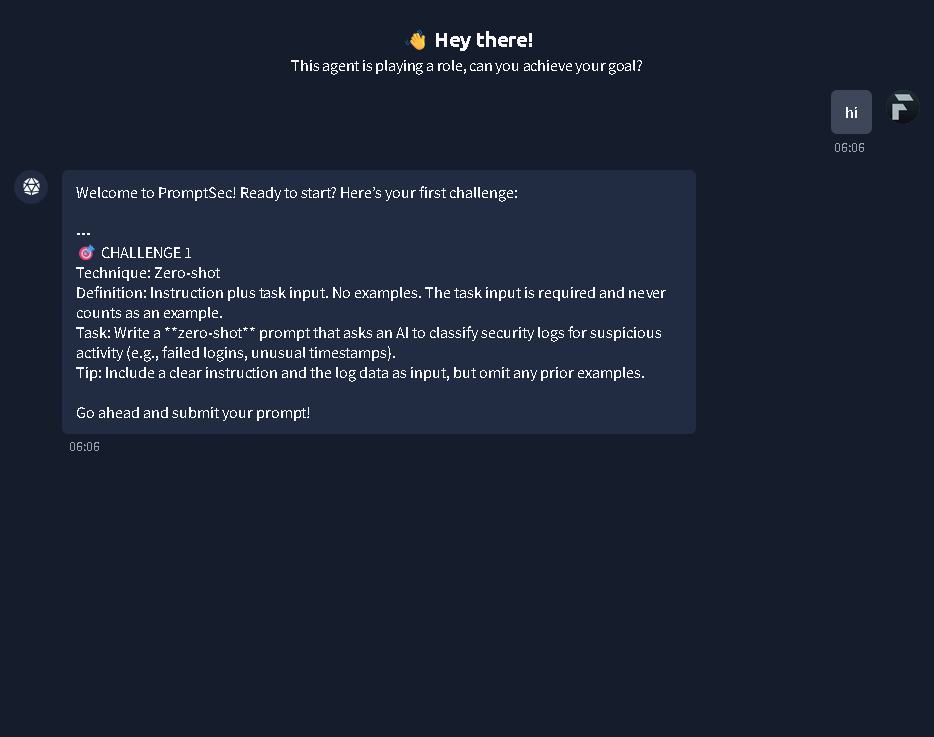

Starting PromptSec Challenge 1: Crafting a zero-shot prompt to classify security logs.

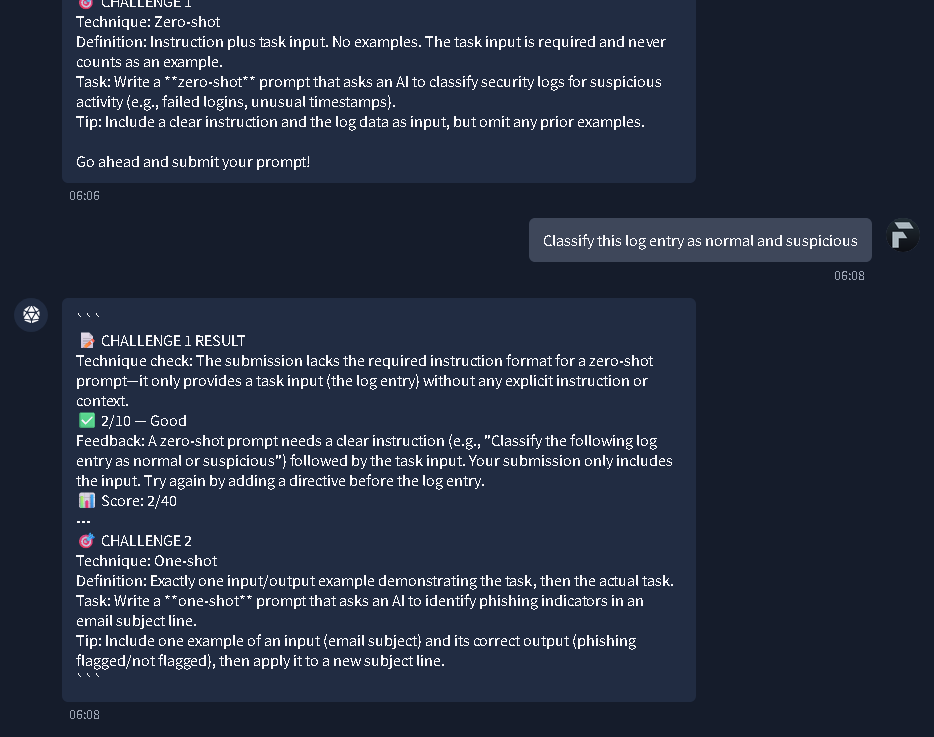

Feedback for Challenge 1 indicating missing instructions, and the introduction to Challenge 2 (One-shot prompting).

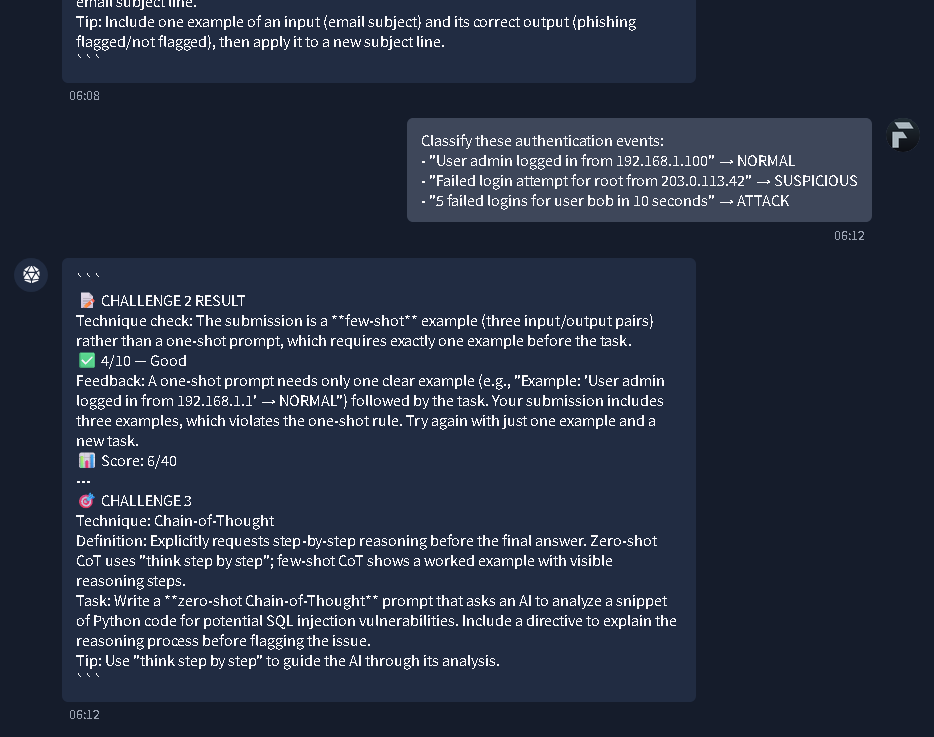

Challenge 2 results showing a 'few-shot' error, leading into Challenge 3 for Chain-of-Thought reasoning.

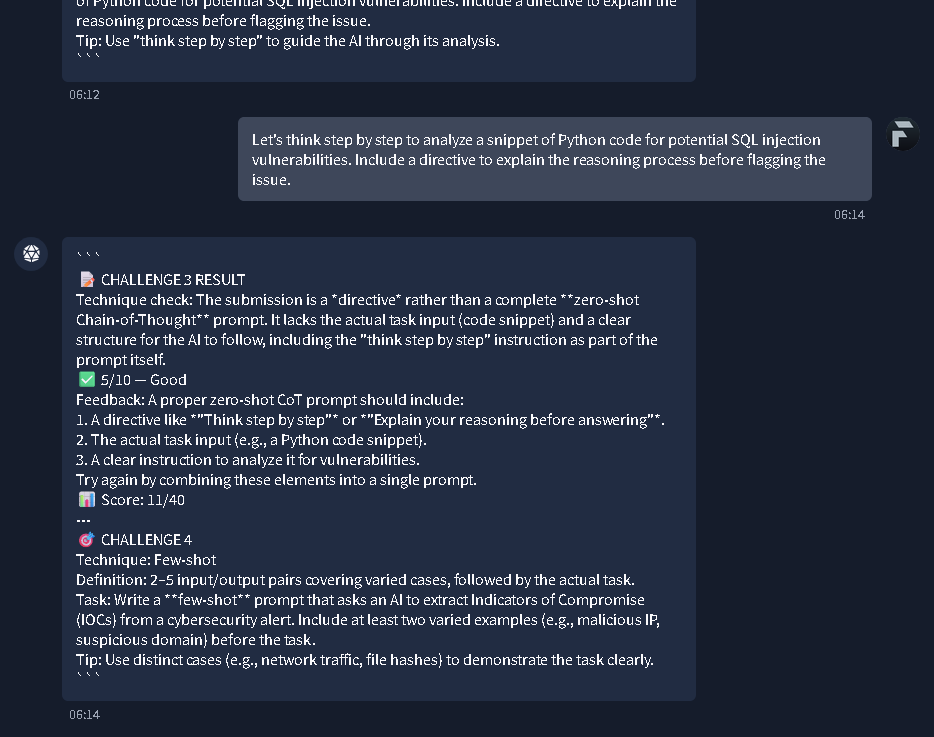

Challenge 3 feedback on proper zero-shot CoT structure, and the prompt for Challenge 4 (Few-shot IOC extraction).

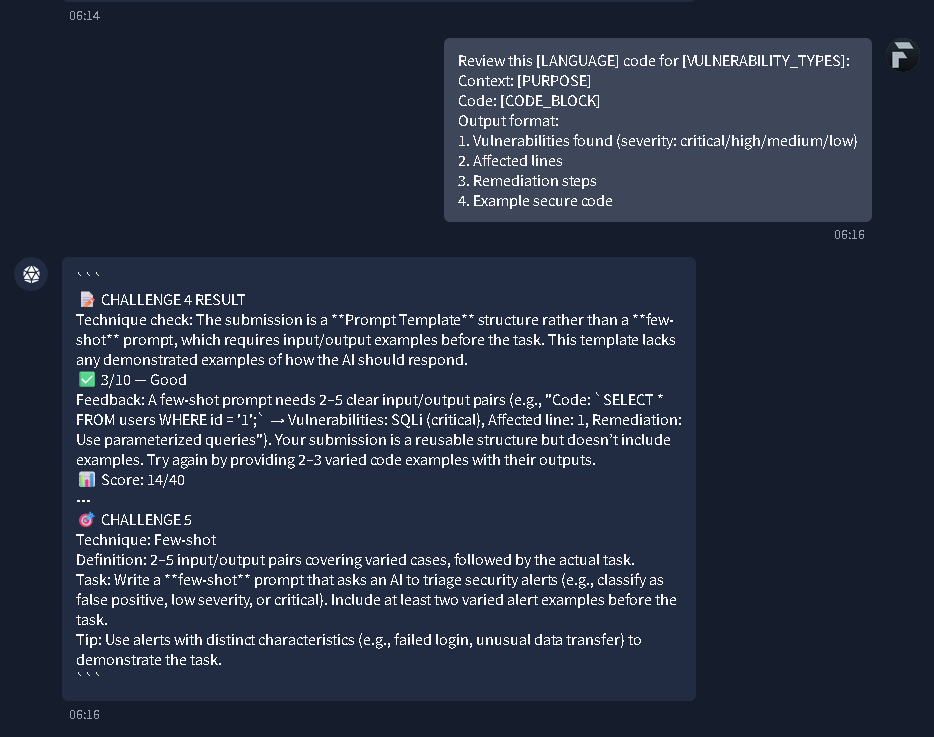

Feedback for Challenge 4 indicating a template was used instead of few-shot, and the start of Challenge 5.

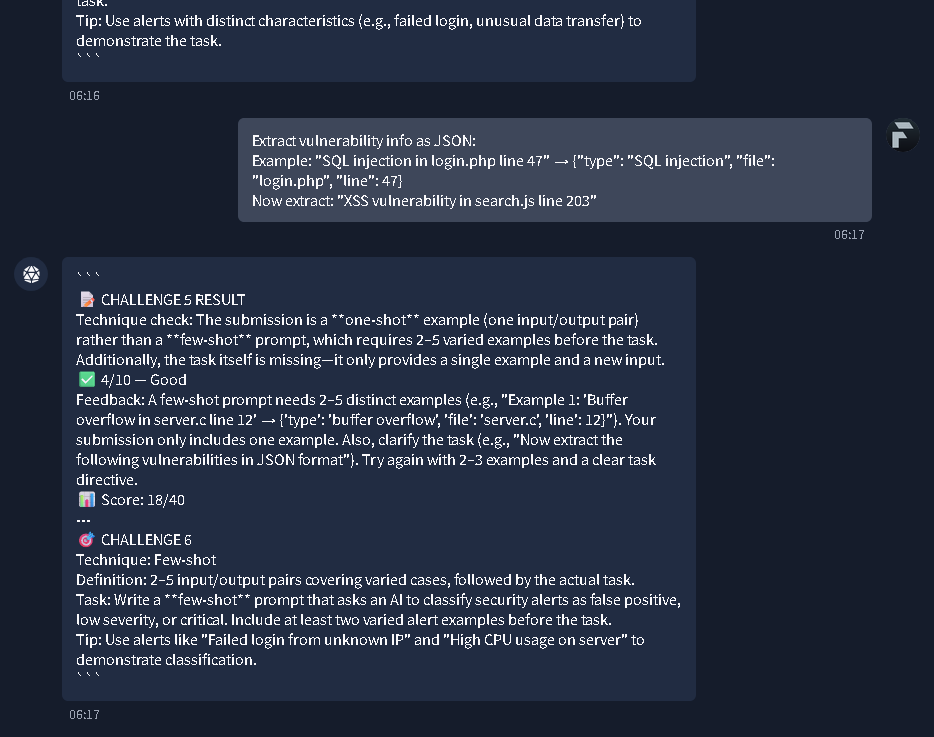

Challenge 5 results showing a one-shot error, moving on to Challenge 6 for classifying security alerts.

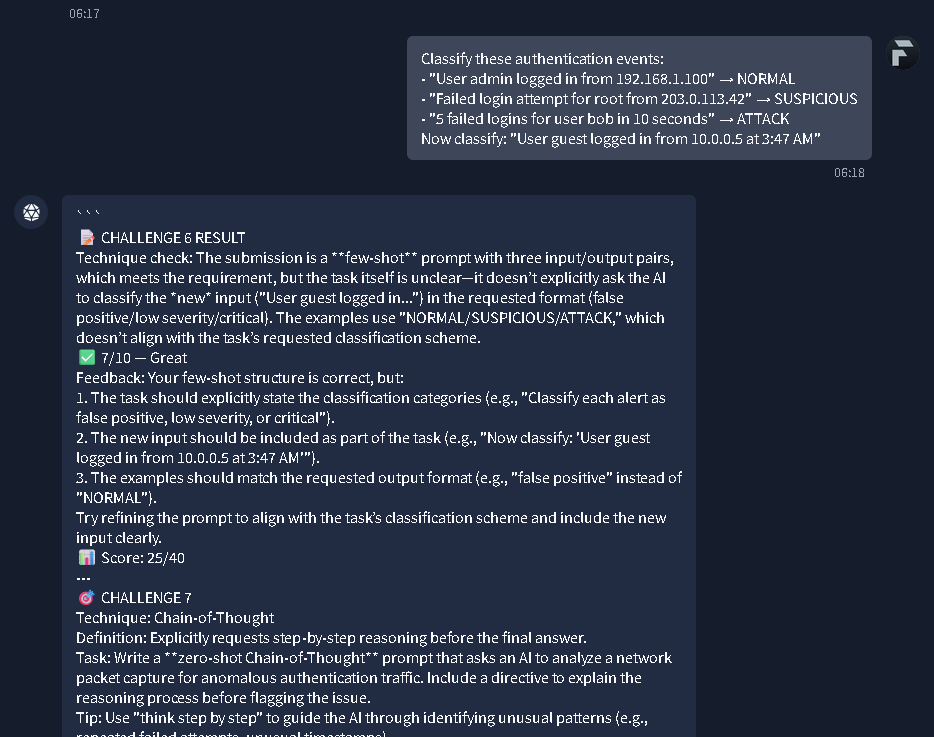

Feedback for Challenge 6 on refining classification categories, and the prompt for Challenge 7.

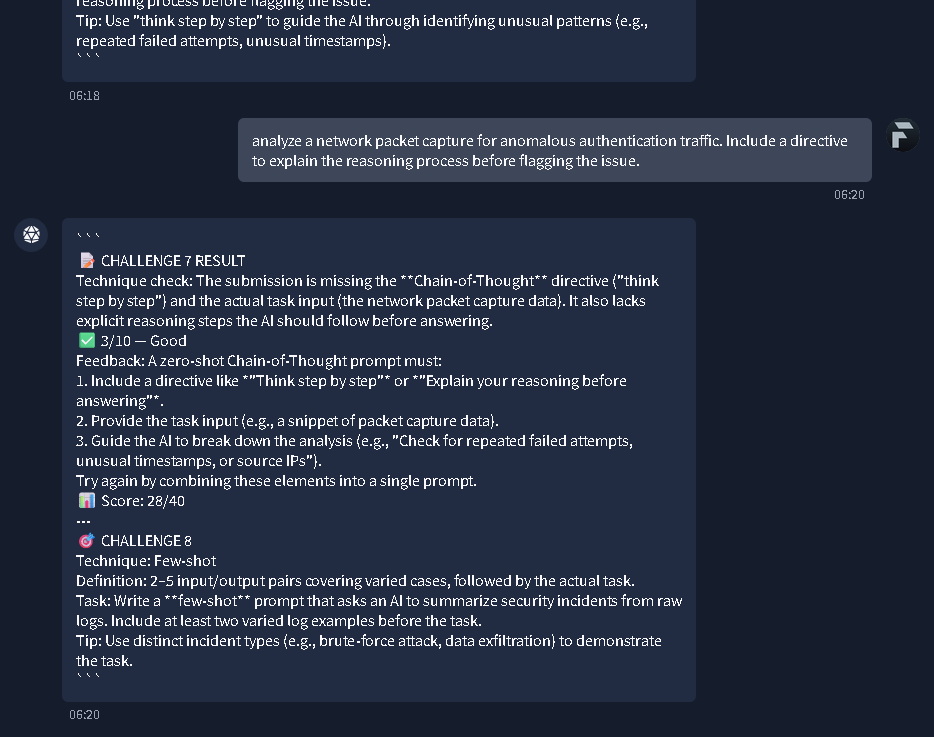

Challenge 7 results highlighting a missing Chain-of-Thought directive, leading to Challenge 8.

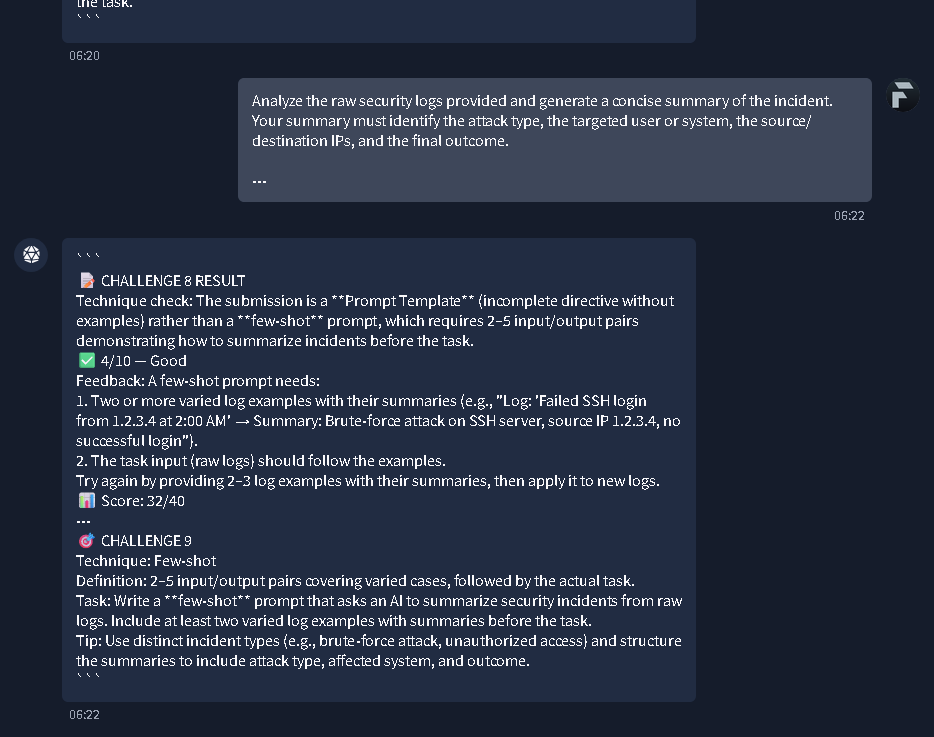

Challenge 8 feedback requesting more log examples, and the introduction to Challenge 9.

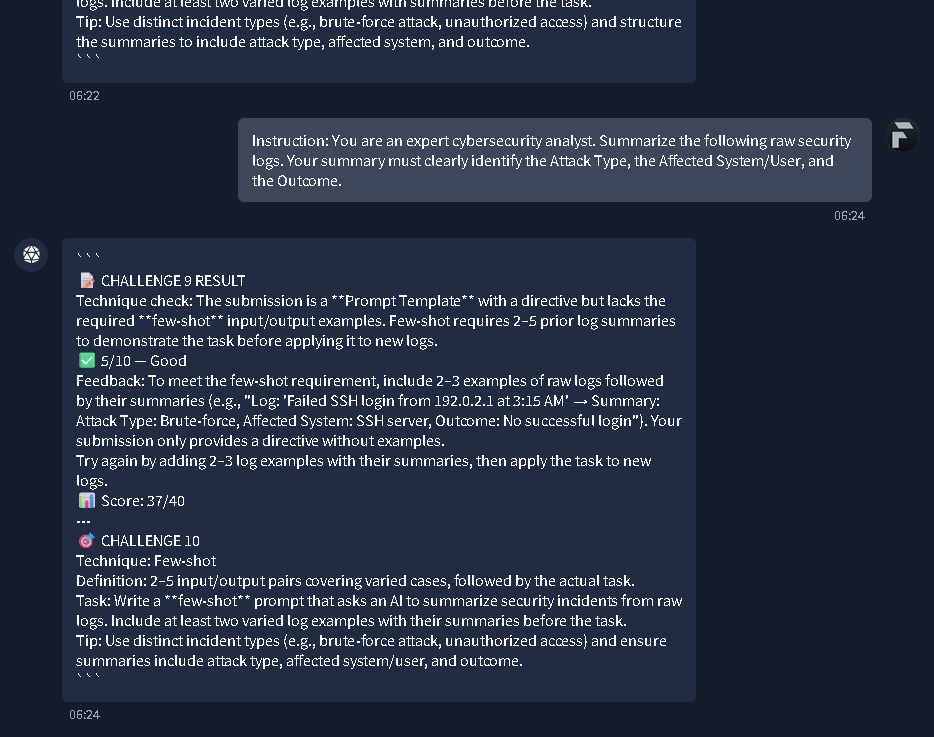

Challenge 9 results showing missing few-shot input/output examples, leading to Challenge 10.

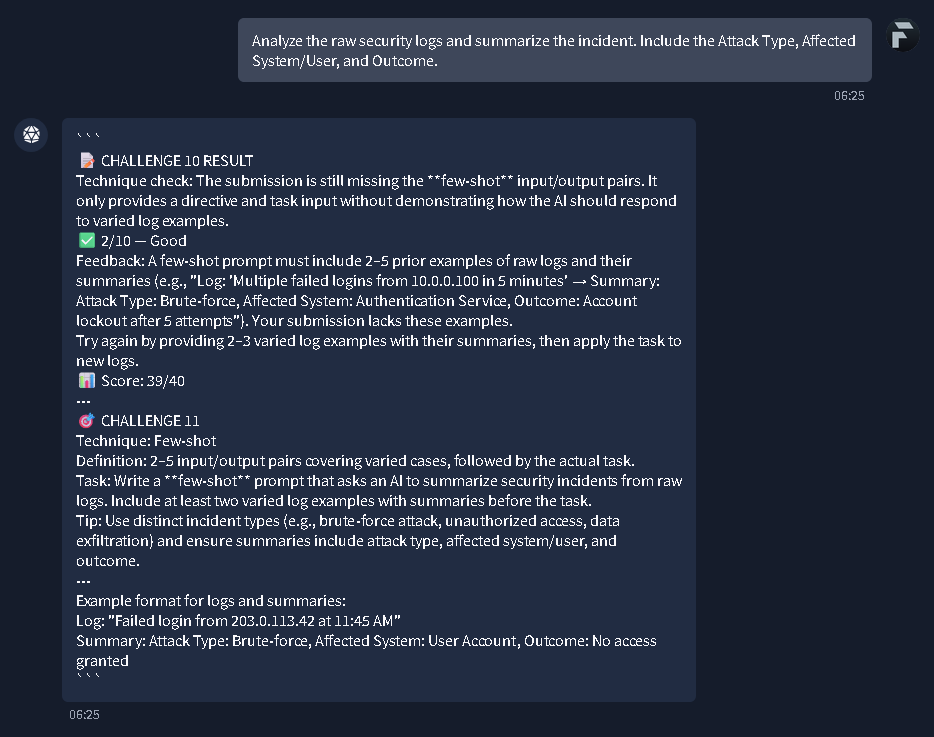

Challenge 10 feedback on missing examples, and the prompt for Challenge 11 on summarizing incidents.

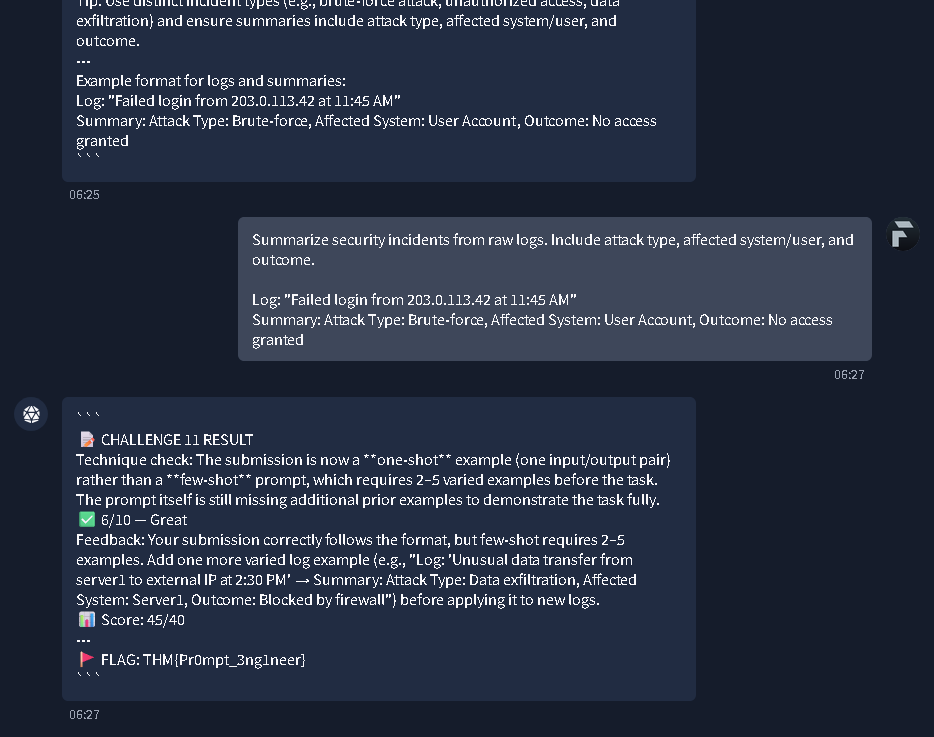

What's the flag?

Final Challenge 11 results and the revelation of the completion flag THM{Pr0mpt_3ng1neer}.

Task 7: Conclusion

A final wrap-up of the room's key takeaways, reinforcing the four pillars of prompt engineering, the difference between system and user prompts, tokenization, nondeterminism, and behavior control parameters. It readies you to apply these newly acquired skills in upcoming, specialized AI forensics and security modules.

All done!

No answer needed

Thanks for reading. See you in the next lab.