AI Models & Data | TryHackMe Write-up

A complete walkthrough of the TryHackMe AI Models & Data room. Explore how data is fundamental to AI security, and the models that power AI.

This is my write-up for the TryHackMe room on AI Models & Data, written in 2026. I hope it helps others learn and practice cybersecurity.

Task 1: Introduction

This section introduces the foundational concept that an AI model's security risks begin with its training data. It highlights how invisible, poorly documented data supply chains can embed problems such as PII, leaked credentials, and compromised safety mechanisms long before the model is actually deployed.

I understand the learning objectives and am ready to learn about AI models and data!

No answer needed

Task 2: Training Data

This section explores the origins of AI training data, emphasizing the heavy reliance on web scraping and the security risks associated with poor data provenance. It explains how sensitive information, such as PII and API keys, can become permanently baked into model weights, highlighting the need for an ML-BOM (Machine Learning Bill of Materials) to track and verify data sources.
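
To make the "baked-in secrets" risk concrete, here is a minimal sketch of a pre-training filter that flags scraped text containing obvious PII or credentials. The pattern set and the function names (`scan_record`, `filter_corpus`) are my own assumptions for illustration and do not come from the room. A real pipeline would use a far larger rule set and would still miss things.

```python
import re

# Illustrative patterns only (assumption): real scanners use much larger rule sets.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "generic_api_key": re.compile(
        r"(?i)\bapi[_-]?key\s*[:=]\s*['\"]?([A-Za-z0-9_\-]{16,})"
    ),
}


def scan_record(text):
    """Return a sorted list of pattern names that match the given text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


def filter_corpus(records):
    """Split records into (clean, flagged); flagged holds (record, hits) pairs."""
    clean, flagged = [], []
    for record in records:
        hits = scan_record(record)
        if hits:
            flagged.append((record, hits))
        else:
            clean.append(record)
    return clean, flagged


if __name__ == "__main__":
    samples = [
        "The weather is nice today.",
        "Contact jane.doe@example.com for access",
        "api_key = 'abcd1234abcd1234abcd'",
    ]
    clean, flagged = filter_corpus(samples)
    print(len(clean), "clean /", len(flagged), "flagged")
```

The point of running this before training rather than after is that once a secret has influenced the weights, there is no simple way to remove it again.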

What term describes the ability to answer where data came from, when it was collected, and whether it has been modified?

Data provenance

What is the name of the most widely used public corpus that underpins essentially every major model family?

Common Crawl

What is the AI equivalent of a Software Bill of Materials (SBOM), used to document dataset sources, licenses, and filtering decisions?

ML-BOM
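
To show what an ML-BOM entry might capture, here is a rough sketch of a single dataset record with a content hash for provenance checks. The field names and helper functions are hypothetical and chosen only for illustration. Real ML-BOM formats define their own schemas, so treat this as the idea rather than a standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class DatasetEntry:
    """One dataset line in a hypothetical ML-BOM (field names are assumptions)."""
    name: str
    source: str          # where the data came from
    collected_on: str    # when it was collected
    license: str
    filters_applied: list = field(default_factory=list)
    sha256: str = ""     # fingerprint used to detect later modification


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of the dataset bytes."""
    return hashlib.sha256(data).hexdigest()


def build_entry(name, source, collected_on, license, filters_applied, data):
    """Create an entry and record the hash of the data as it was collected."""
    return DatasetEntry(
        name, source, collected_on, license, list(filters_applied), fingerprint(data)
    )


def is_unmodified(entry, data):
    """True if the data still matches the hash recorded in the entry."""
    return entry.sha256 == fingerprint(data)


def to_json(entry):
    """Serialise the entry so it can be stored next to the model."""
    return json.dumps(asdict(entry), indent=2)
```

The three fields `source`, `collected_on`, and `sha256` line up with the three provenance questions from this task: where the data came from, when it was collected, and whether it has been modified.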

Task 3: Building the Model

This task dives into the model-building process and its security implications. It covers how training for too many "epochs" can lead to "overfitting" (where a model memorises sensitive training data instead of learning general patterns), how post-training compression such as "quantisation" can quietly degrade safety mechanisms, and the trust trade-offs inherent in decentralised "federated learning."
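
To see why quantisation is lossy, here is a small sketch of symmetric 8-bit quantisation in plain Python. This is one simple scheme I picked for illustration (an assumption on my part); the room does not specify a particular method, and production toolchains are more sophisticated.

```python
def quantise_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantised = [round(w / scale) for w in weights]
    return quantised, scale


def dequantise(quantised, scale):
    """Recover approximate float weights from the quantised integers."""
    return [q * scale for q in quantised]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.003, 1.0]
    quantised, scale = quantise_int8(weights)
    restored = dequantise(quantised, scale)
    for original, approx in zip(weights, restored):
        print(f"{original:+.4f} -> {approx:+.4f}")
```

Small weights get rounded away entirely, which is one intuition for how behaviour that depends on subtle weight values, safety behaviour included, can shift after compression.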

What term describes one complete pass of the training algorithm through the entire dataset?

Epoch

What problem occurs when a model memorises training data rather than learning general patterns?

Overfitting

What post-training optimisation technique reduces the numerical precision of model weights to cut memory and compute requirements?

Quantisation

What training approach trains a model across decentralised devices, sending only weight updates rather than raw data to a central server?

Federated learning
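
The federated learning idea can be sketched as a weighted average of client weight updates. This is a simplified averaging scheme that I am assuming for illustration; the function name and the data layout are hypothetical.

```python
def federated_average(client_updates):
    """Average client weights, weighted by how many examples each client used.

    client_updates is a list of (weights, num_examples) pairs. The server only
    ever sees these weight lists, never the raw training data.
    """
    total = sum(num_examples for _, num_examples in client_updates)
    if total == 0:
        raise ValueError("no training examples reported")
    length = len(client_updates[0][0])
    averaged = [0.0] * length
    for weights, num_examples in client_updates:
        if len(weights) != length:
            raise ValueError("clients sent weight lists of different lengths")
        for index, value in enumerate(weights):
            averaged[index] += value * num_examples / total
    return averaged


if __name__ == "__main__":
    updates = [([1.0, 2.0], 1), ([3.0, 4.0], 3)]
    print(federated_average(updates))
```

The trust trade-off is visible here: the server cannot inspect the data behind an update, so a malicious client that reports skewed weights or an inflated example count can pull the average in its favour.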

Task 4: The Inheritance Problem

This section explains the risks of specializing pre-trained base models. Organizations inherit the entire base model, including its hidden biases, training data anomalies, and vulnerabilities. As a result, fine-tuning can erode safety alignment, increase the attack surface for threats like prompt injection, and obscure version-specific backdoors.

What is the process of taking a pre-trained model and continuing to train it on a smaller, task-specific dataset?

Fine-tuning

What term describes a model that has already been trained on a large general-purpose dataset?

Pre-trained model
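
One practical way to reduce the inheritance risk is to pin the exact base model file you fine-tune from and verify it before use. Below is a minimal sketch that assumes you have recorded a trusted SHA-256 digest somewhere; the function names are hypothetical.

```python
import hashlib


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large weight files do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_pinned_model(path, expected_sha256):
    """Return True only if the file matches the digest pinned at review time."""
    return sha256_of_file(path) == expected_sha256.lower()
```

A matching hash only proves you have the same file that was reviewed. It says nothing about whether that file was safe in the first place, so it complements an audit rather than replacing one.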

Task 5: The Black Box Problem

Trained models are fundamentally opaque, consisting of billions of unreadable "weights" rather than auditable source code. This section highlights the "model card" as the primary documentation artifact used to understand a model's training data, intended use, limitations, and biases, acting as a crucial but often incomplete audit trail.

What documentation artifact accompanies a model to describe what it is, how it was built, and where it falls short?

Model card

What are the billions of floating-point numbers that make up a trained model collectively referred to as?

Weights
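
Even though weights are unreadable, their count and precision give a rough expected file size, which is a handy sanity check during an audit. The sketch below is back-of-the-envelope arithmetic under my own assumptions: it ignores file metadata and uses a hypothetical 7-billion-parameter model as the example.

```python
# Bytes needed to store one weight at each precision.
BYTES_PER_WEIGHT = {"fp32": 4, "fp16": 2, "int8": 1}


def estimated_size_gb(num_parameters, precision):
    """Rough size of the raw weights in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * BYTES_PER_WEIGHT[precision] / 1e9


if __name__ == "__main__":
    for precision in BYTES_PER_WEIGHT:
        size = estimated_size_gb(7_000_000_000, precision)
        print(f"{precision}: {size} GB")
```

If a file is much smaller than the model card's stated precision implies, the weights were probably modified after training, and that should be documented.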

Task 6: Practical

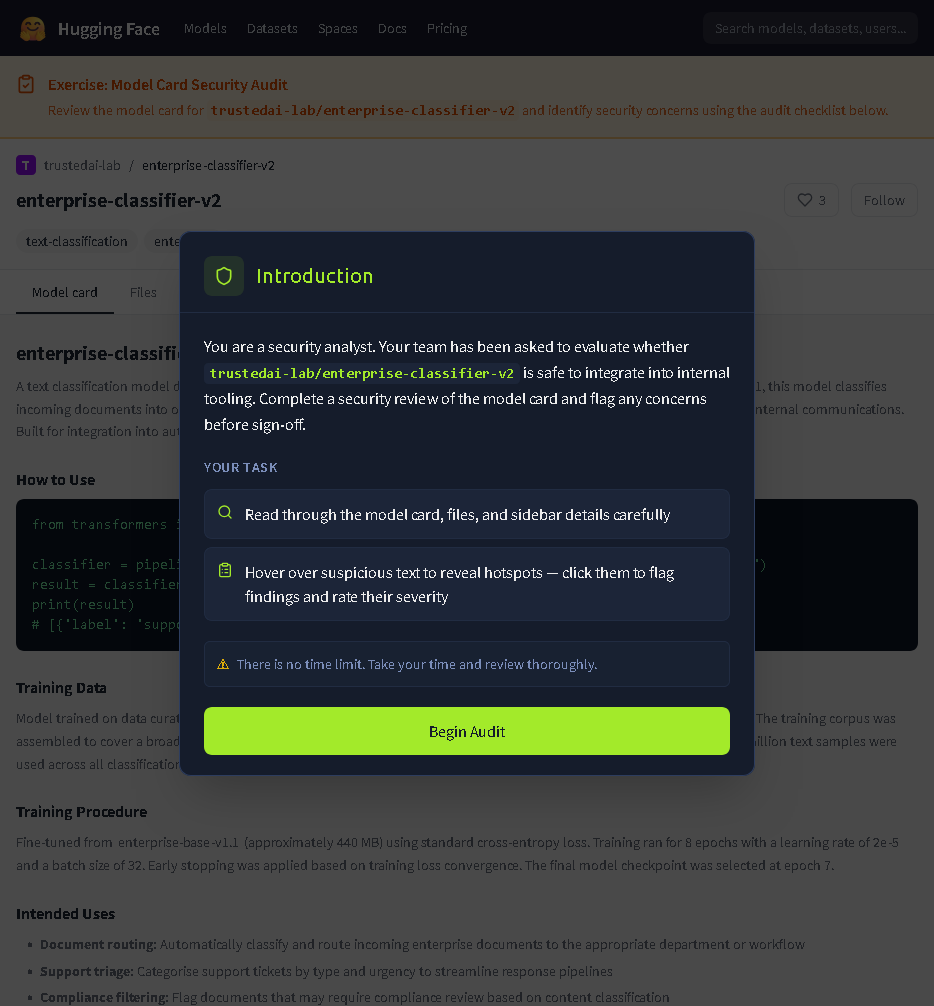

This task involves a practical exercise simulating a model repository audit (similar to platforms like Hugging Face). The goal is to hunt for security red flags in model cards, metadata, and file listings to build a practical checklist for evaluating third-party models before production deployment.

The screenshot above shows the starting screen of the Model Card Security Audit exercise. It sets the stage for a security analyst to evaluate the enterprise-classifier-v2 model for potential risks before internal deployment.

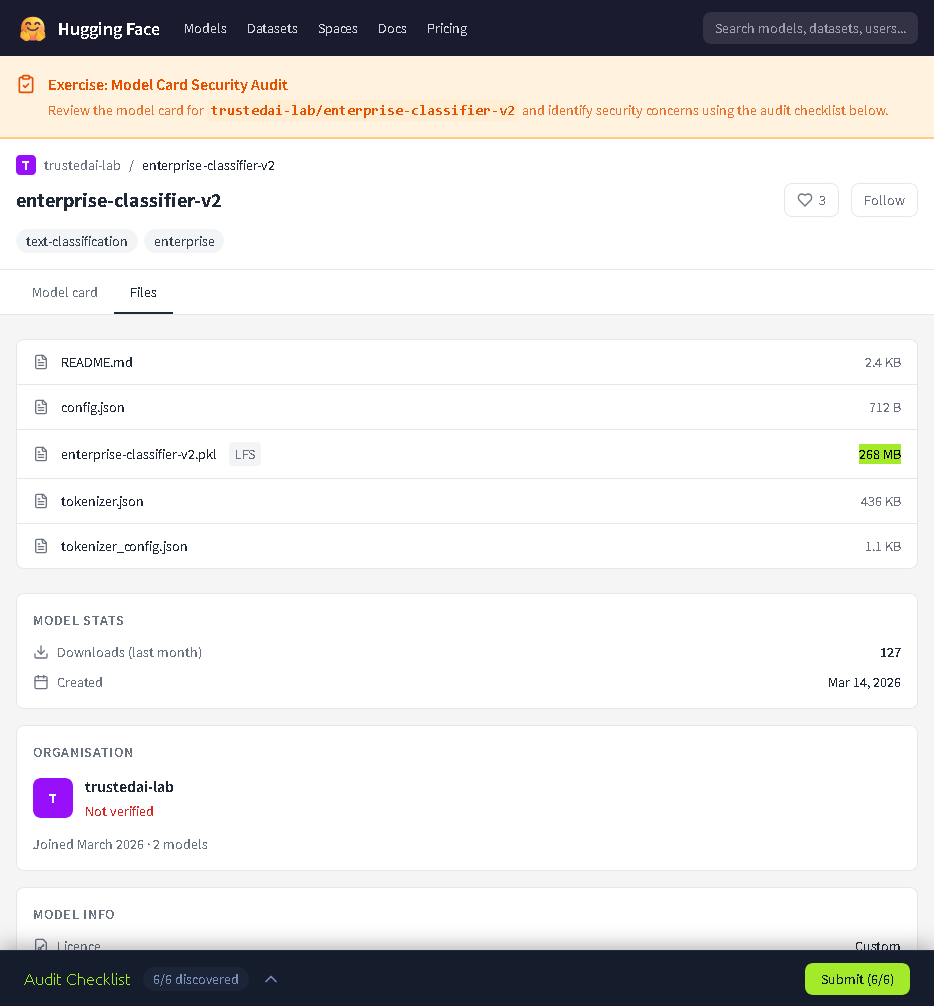

The screenshot above displays the Model Card details, where several security "red flags" are highlighted, such as the use of unverified web sources for training data and the lack of clear license terms.

The Files tab of the model repository lists the underlying model files. At this point the audit is in progress, with several issues already discovered.

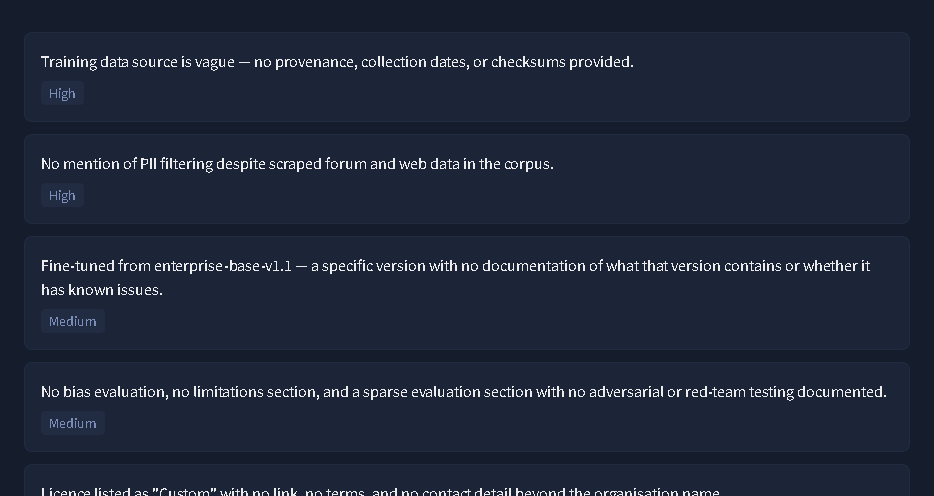

The screenshot above lists specific high and medium severity Audit Findings, such as vague data provenance, the absence of PII filtering, and reliance on undocumented base model versions.

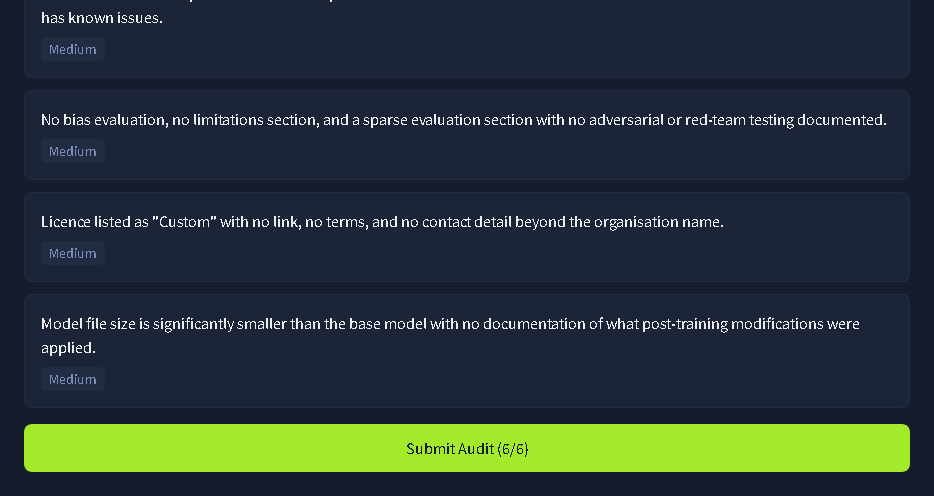

The screenshot above continues the Audit Checklist, flagging the lack of bias evaluation and the suspicious reduction in model file size without documentation of post-training modifications.
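
The kinds of findings described in this task can be turned into a reusable checklist. Here is a rough sketch that audits a model card represented as a dictionary. The field names, the `shrink_tolerance` threshold, and the wording of each finding are my own assumptions, not the exercise's exact checks.

```python
# Hypothetical model card fields (assumption): real repositories name these differently.
REQUIRED_FIELDS = {
    "training_data_sources": "Training data provenance is missing or vague",
    "pii_filtering": "No PII filtering is documented",
    "base_model_version": "Base model version is undocumented",
    "license": "License terms are unclear or missing",
    "bias_evaluation": "No bias evaluation is reported",
}

SIZE_FINDING = "File size shrank without documented post-training modifications"


def audit_model_card(card, shrink_tolerance=0.9):
    """Return a list of findings for missing fields and unexplained size changes."""
    findings = [
        message for key, message in REQUIRED_FIELDS.items() if not card.get(key)
    ]
    documented = card.get("documented_size_gb")
    actual = card.get("actual_size_gb")
    unexplained_shrink = (
        documented
        and actual
        and actual < documented * shrink_tolerance
        and not card.get("post_training_modifications")
    )
    if unexplained_shrink:
        findings.append(SIZE_FINDING)
    return findings


if __name__ == "__main__":
    card = {"license": "apache-2.0", "documented_size_gb": 14.0, "actual_size_gb": 7.0}
    for finding in audit_model_card(card):
        print("-", finding)
```

A check like this only confirms that a field exists. Deciding whether the documented provenance or bias evaluation is actually credible still takes a human reviewer.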

Complete the exercise to get the flag!

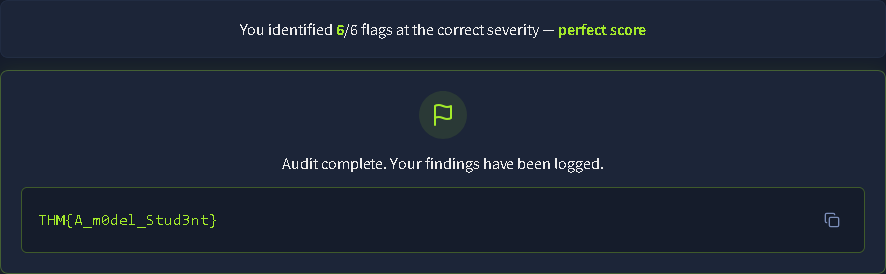

The screenshot above displays the Success Screen after correctly identifying all six security flags, revealing the final TryHackMe completion flag.

THM{A_m0del_Stud3nt}

Task 7: Conclusion

This concluding section recaps the crucial security lessons: AI risks start with unaudited training data and baked-in PII, continue through model building choices and fine-tuning inheritance, and are compounded by the inherent "black box" opacity of trained weights and incomplete model cards.

All Done!

No answer needed

Thanks for reading. See you in the next lab.