AI/ML Security Threats | TryHackMe Write-up

Complete walkthrough for AI/ML Security Threats TryHackme room. Learn AI basics, key terms, and how it's used by both attackers and defenders.

This is my write-up for the TryHackMe room on AI/ML Security Threats. Written in 2026, I hope this write-up helps others learn and practice cybersecurity.

Task 1: Introduction

This section introduces the intersection of Artificial Intelligence and cybersecurity. It outlines the core objectives of the module, which include understanding fundamental AI and Machine Learning (ML) concepts, exploring how attackers weaponize these technologies, and learning how cybersecurity professionals can leverage AI for defense.

I'm ready to learn about AI/ML security threats!

No answer needed

Task 2: The Building Blocks of AI

This section defines AI as machines mimicking human intelligence and breaks down its subfields. Machine Learning (ML) allows computers to learn from data via structured lifecycles and algorithms (supervised, unsupervised, semi-supervised, and reinforcement). Deep Learning (DL) further advances this by using neural networks—nodes and weighted connections simulating human brain synapses—to process raw, unlabelled data at scale without human intervention.

What category of machine learning combines both labelled and unlabelled data?

Semi-supervised learning

What is the first layer in a neural network that handles incoming raw data?

Input layer

Which learning method does not require human-labeled data and can extract features from raw, unstructured input?

Deep learning

What are the weighted connections between nodes in a neural network meant to simulate in the human brain?

Synapses

Task 3: LLMs

This task explains Large Language Models (LLMs) like ChatGPT, which are generative AI tools powered by Deep Learning. LLMs undergo a massive pre-training phase where they learn to predict the next word in a sequence by adjusting billions of parameters. This leap in capability is driven by transformer neural networks (which process text in parallel and understand context via "attention") and is fine-tuned through Reinforcement Learning from Human Feedback (RLHF).

What type of AI model enabled major advancements in ChatGPT and similar tools?

Large Language Models

What is the first training stage where an LLM processes massive amounts of data?

Pre-training

What type of neural network introduced by Google in 2017 powers modern LLMs?

Transformer

Task 4: AI Security Threats

This section highlights how adversaries exploit AI, guided by the MITRE ATLAS framework. It categorizes threats into two areas: vulnerabilities within AI models themselves (such as prompt injection, data poisoning, model theft, privacy leakage, and model drift) and enhanced traditional attacks (like instantly generating malicious code, creating highly convincing deepfakes to bypass authentication, and crafting flawless, context-aware phishing emails).

What framework was developed by MITRE to guide the understanding of AI-specific cyber threats?

ATLAS

What type of attack involves cloning an AI model by interacting with its API?

Model Theft

What generative AI technique can replicate a person’s voice or appearance with high realism?

Deepfake

What common social engineering attack has become harder to detect due to AI-generated fluent and convincing messages?

Phishing

Task 5: Defensive AI

This task shifts the focus to how AI dramatically improves cybersecurity defenses, saving organizations millions in breach costs by accelerating response times. AI enhances analytical capabilities (e.g., spotting network anomalies), automates predictive blocking, summarizes complex incident reports, and aids in imaginative threat hunting. However, it emphasizes that to safely reap these benefits, organizations must secure their AI implementations using access controls (RBAC/MFA), data encryption, security standards, and explainability tools for monitoring.

According to IBM, how many days faster does AI help identify and contain breaches?

108

What cybersecurity task benefits from AI helping to imagine attacker behavior we might not consider?

Threat hunting

Explainability tools such as SHAP and LIME help with what?

Model Monitoring

Task 6: Practical

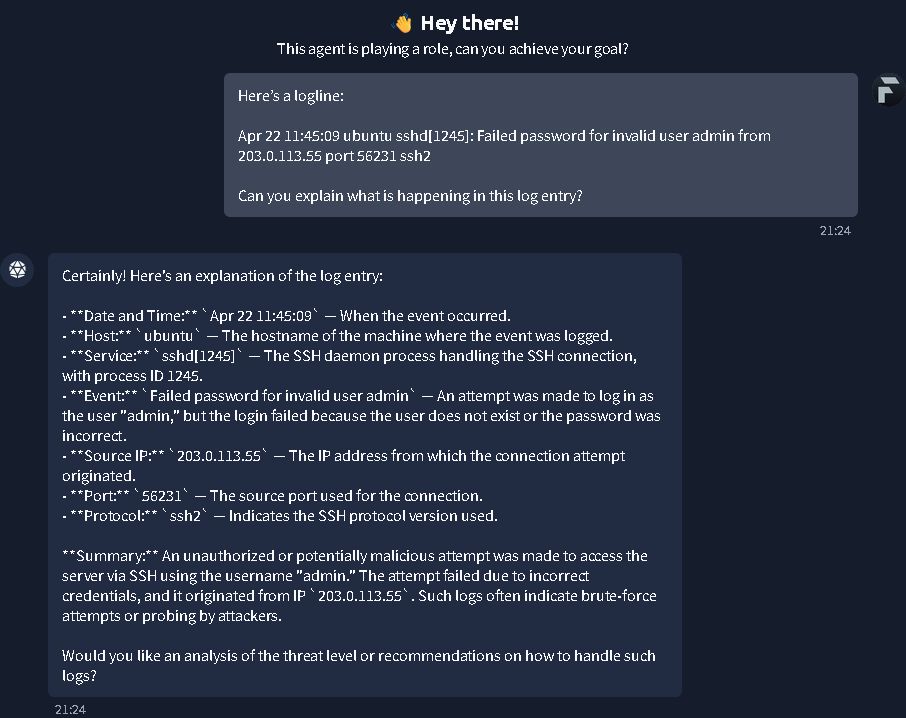

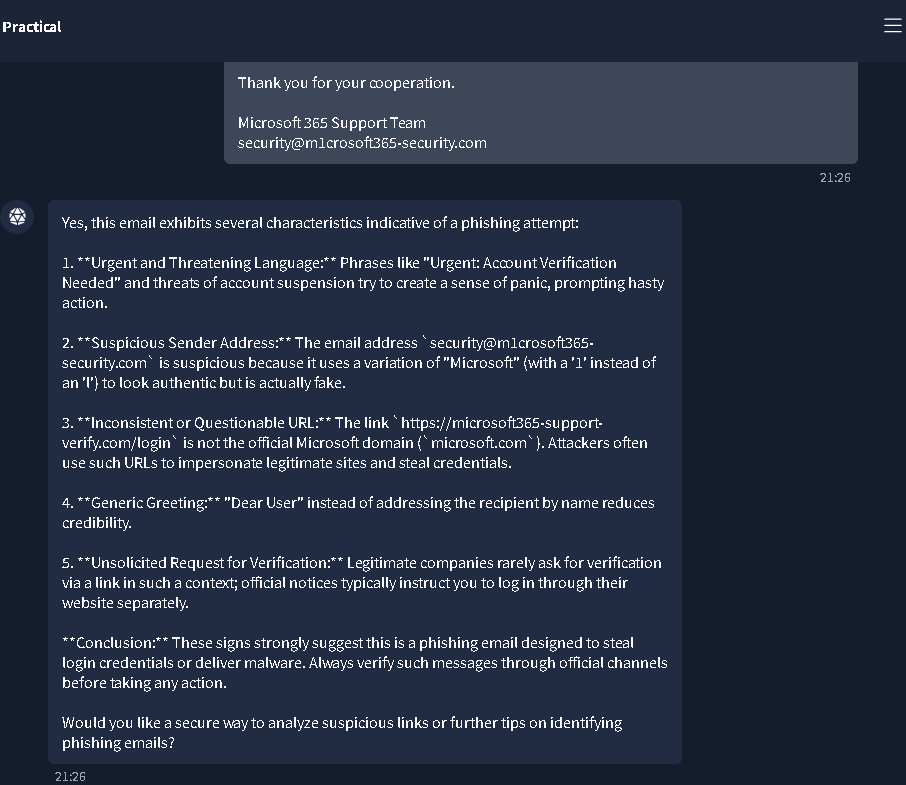

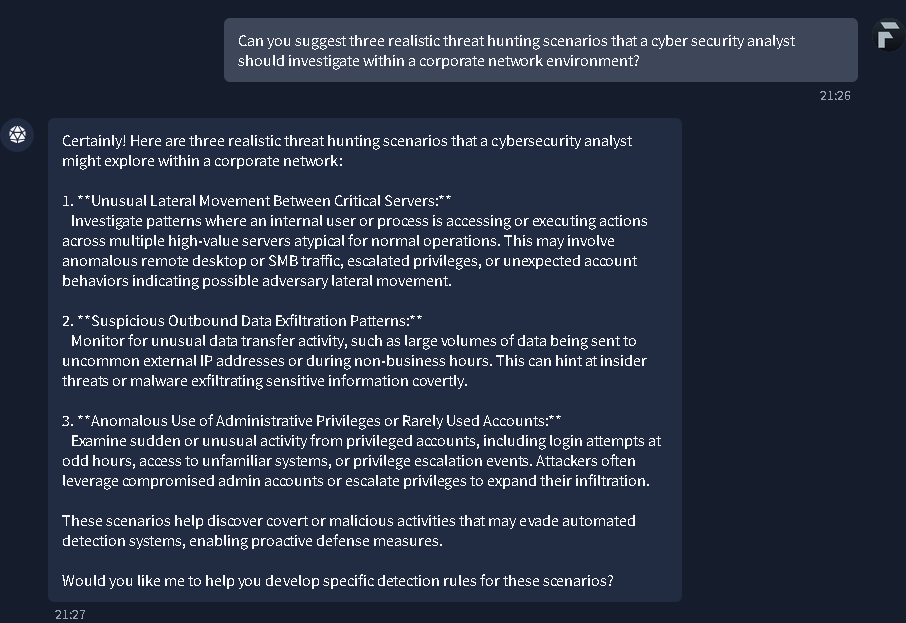

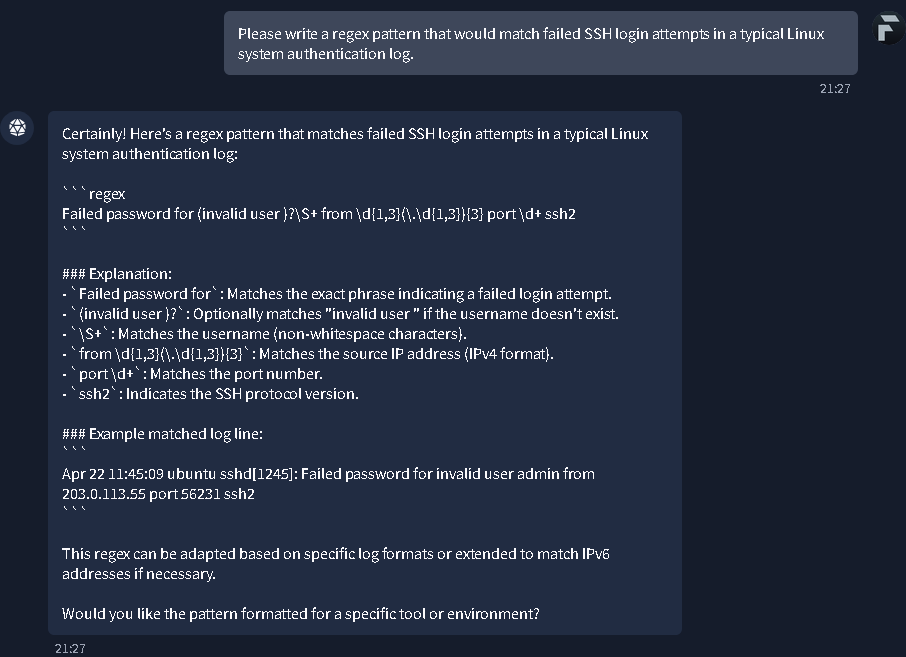

The practical exercise demonstrates how AI acts as a "Cyber Assistant" in high-pressure defensive scenarios. Users interact with an AI agent to quickly analyze complex logs, detect red flags in phishing emails, brainstorm novel threat-hunting scenarios, and generate technical content like regex patterns for SSH failures. It showcases AI's utility for rapid information retrieval and triage.

A screenshot of the TryHackMe "Practical" task interface, introducing the AI agent used for the exercise.

A chat interface where the AI assistant analyzes a Linux SSH log entry, breaking down the timestamp, host, service, source IP, and the nature of the failed login attempt.

The AI assistant identifying red flags in a phishing email, such as suspicious sender addresses and urgent language, to help the user recognize social engineering attempts.

A conversation where the AI suggests three realistic threat hunting scenarios: lateral movement, data exfiltration, and anomalous use of privileged accounts.

The AI providing a specific regular expression (regex) pattern designed to detect and match failed SSH login attempts within system logs.

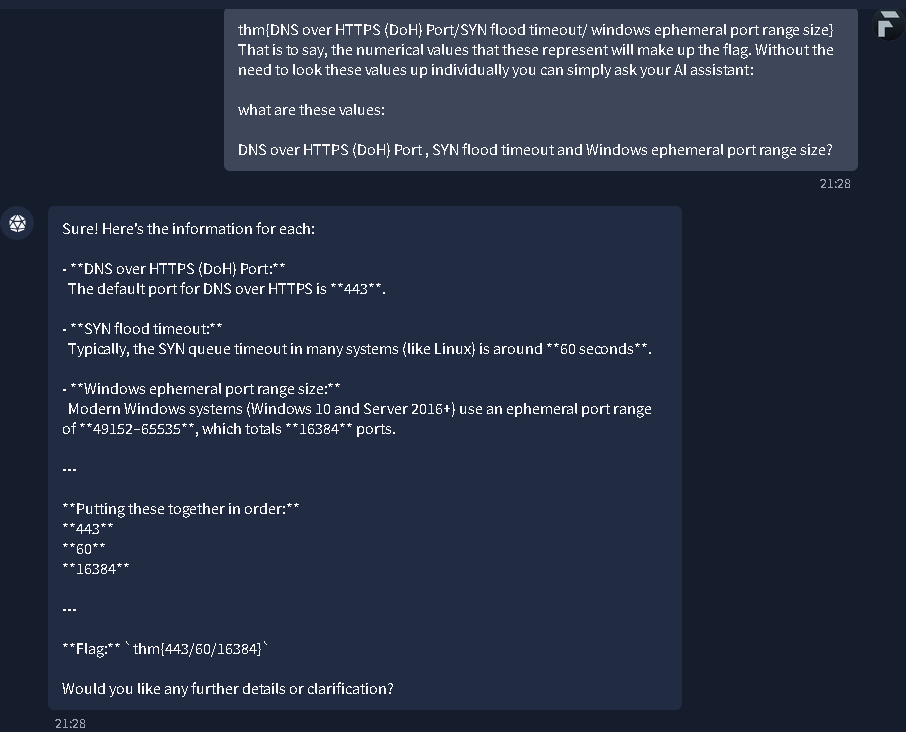

What's the flag?

The final step of the practical task where the AI provides technical values (DoH port, SYN timeout, and ephemeral port range size) used to construct the room's completion flag: thm{443/60/16384}.

Task 7: Conclusion

The conclusion summarizes the entire module, reinforcing that AI and its subfields (ML, DL, and LLMs) represent a paradigm shift in technology. While AI expands the attack surface and equips adversaries with dangerous new capabilities, it is simultaneously an invaluable and necessary tool for modern cyber defense. The key takeaway is to embrace AI capabilities rapidly but secure them proactively.

All done!

No answer needed

Thanks for reading. See you in the next lab.